Capturing Claude's Autonomous Agent Mode: A Deep Dive into Dispatch

I let Claude plan a 6-hour Japan trip in Cowork mode. Then I tried to replay it — and vibe-replay couldn't see the session at all. Here's what it took to fix that.



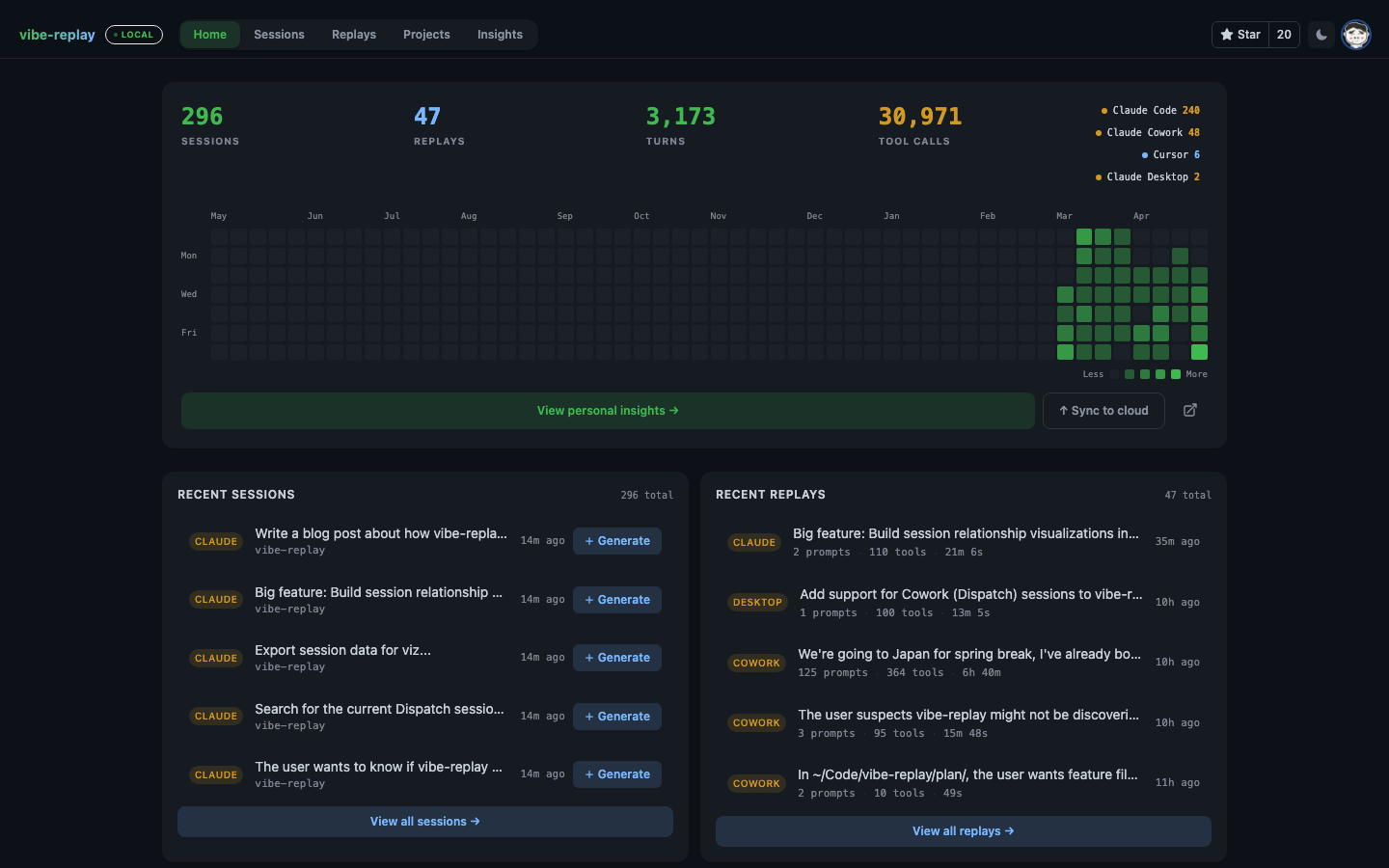

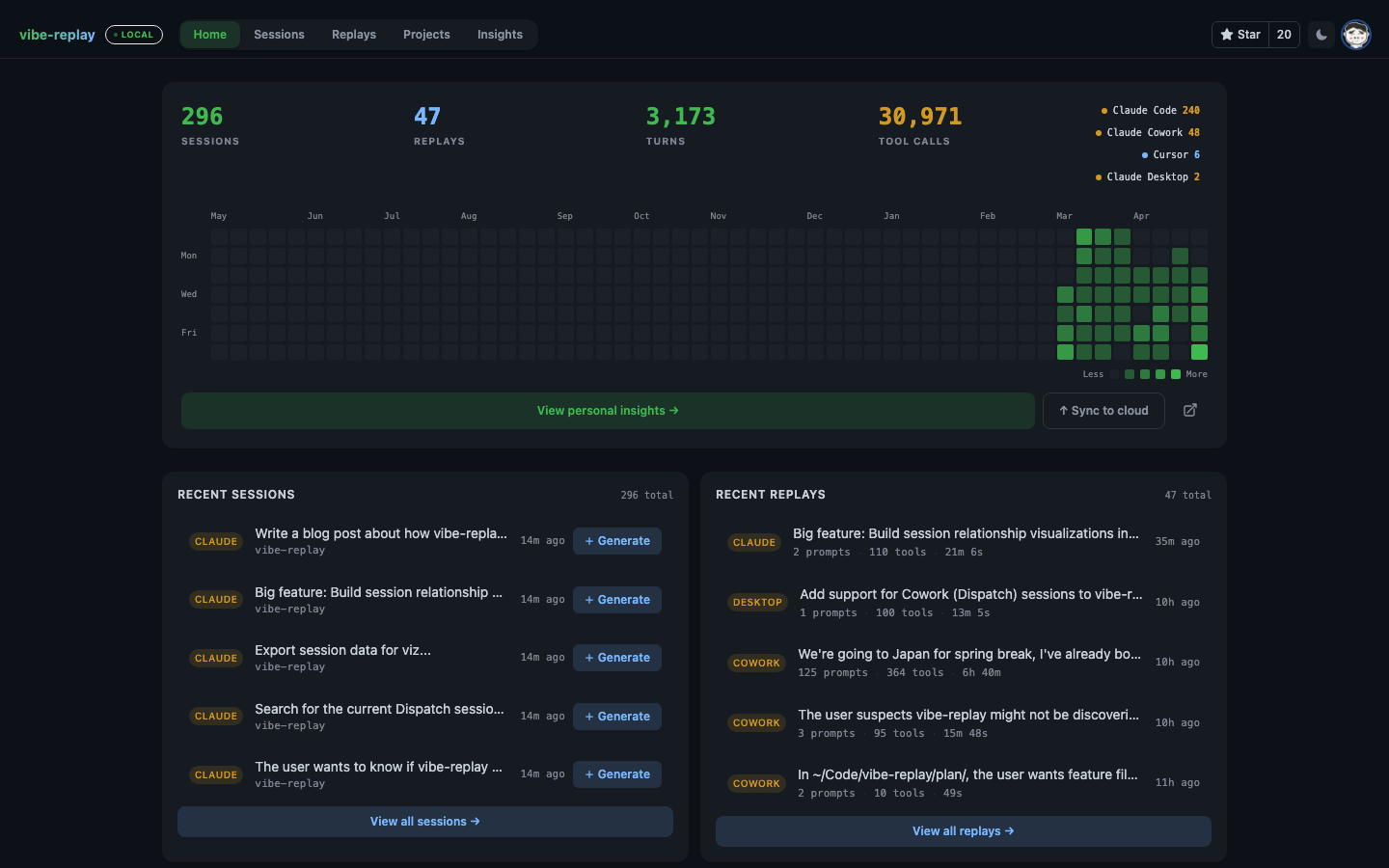

vibe-replay now tracks your AI coding activity across machines and tools. GitHub-style heatmaps, streak tracking, model usage breakdowns — and shareable public profiles.

We ran the built-in /simplify command on our own repo and captured every file read, agent spawn, and fix as an interactive replay. Here's what we learned by watching the black box.

513,000 lines of TypeScript, extracted from a source map left in the npm package. We found undocumented JSONL fields, hidden metadata, and MCP tool naming conventions — then turned them into product improvements.

2.1 GB in ~/.cursor/, another 4.9 GB in Application Support, and a 1.2 GB state.vscdb. Cursor's local data is everywhere: transcripts, SQLite chat stores, global state blobs, checkpoints, and editor history.

858 MB in three weeks. Every prompt, every tool call, every file edit — all stored as plain text in ~/.claude/. Here's what's inside.

I gave Claude Code 8 prompts to create vibe-replay, an open-source tool that turns AI coding sessions into interactive replays. 53 minutes, 447 tool calls. Watch the entire process unfold.